Introduction

Here is a solution that lets a first-person player activate buttons, switches, levers and more in Unity.

The solution consists of three scripts that work together to detect interactive elements and activate them. In addition, two example scripts are provided that reproduce what is shown in the accompanying video.

All the important information is also summarized in a tutorial on my YouTube channel.

Note that this solution does not implement any particular action. Two example scripts illustrate how it is used, but each specific action must be programmed on its own.

Description of the download files

This solution consists of three basic scripts: “CheckInteraction”, “InteractionReceiver” and “IAction”.

CheckInteraction checks whether the player is looking at something they can interact with. It can be added to any GameObject, but it makes the most sense to place it on our character.

InteractionReceiver is placed on every object in our world that the character can operate, for example buttons, levers, doors, etc. These objects must also have a Collider so that the CheckInteraction script can detect them; otherwise, you won’t be able to add this script to the GameObject.

IAction is a programming interface. It is not attached to any GameObject, but it is essential to the solution, so we must pay attention to how it is used.
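For reference, here is a minimal sketch of what IAction might look like, assuming a single parameterless method named Activate (the method name comes from this article; the exact declaration in the package may differ):

```csharp
// IAction.cs: hypothetical reconstruction, not the package's actual file.
// Any MonoBehaviour that implements this interface can be triggered
// by an InteractionReceiver.
public interface IAction
{
    // Performs the action (open a gate, change a color, ...).
    void Activate();
}
```

Because it is a plain C# interface rather than a MonoBehaviour, it cannot be attached to a GameObject by itself; only the scripts that implement it can.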

In addition, two more scripts are provided as examples of how the solution is used.

Extra: User Interface

The package also includes the simple user interface seen in the video, which allows you to display messages on the screen.
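As a rough idea of how such an on-screen message could work, here is a hypothetical MessageDisplay component built on Unity’s legacy UI Text. The class and its Show/Hide methods are assumptions for illustration, not the package’s code:

```csharp
using UnityEngine;
using UnityEngine.UI;

// Hypothetical on-screen message helper (not the package's actual UI script).
public class MessageDisplay : MonoBehaviour
{
    // Assign a UI Text element that lives under a Canvas, in the Inspector.
    [SerializeField] private Text messageText;

    // Shows the given message on screen.
    public void Show(string message)
    {
        messageText.text = message;
        messageText.enabled = true;
    }

    // Hides the current message.
    public void Hide()
    {
        messageText.enabled = false;
    }
}
```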

Application Example – Activate Button in Unity

Now let’s see how to use this solution to activate a button in Unity and trigger actions with it.

We start with a simple scene containing a button and a gate.

Character

We place the FPSController prefab from Standard Assets in the scene. If you do not have that package, you will need to download it first.

We assign the CheckInteraction script to this prefab. The “minInteractionDistance” field sets how close the player must be to an object in order to interact with it; in my case I set it to 4.

The “rayOrigin” field is the point from which the ray used to check for interactions is cast. Here I assign the character’s camera, so the character can “see” the objects at the center of the screen.

Receivers – Buttons or switches

The objects that can be operated (such as buttons, switches, levers, etc.) are assigned the “InteractionReceiver” script. This lets the “CheckInteraction” script detect them and allows the player to operate them with the E key.

We can assign a message to the interaction receiver and then use it, for example to show it on screen as in the video. For this, the CheckInteraction script contains a region marked with comments where a Debug.Log call prints the message to the console.
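The detection logic described so far can be sketched as follows. This is a hypothetical reconstruction: only the fields minInteractionDistance and rayOrigin are named in the article, and the sketch assumes InteractionReceiver exposes a public `message` string and a public `Activate()` method (both assumed names). It casts a ray forward from rayOrigin, limited to minInteractionDistance, logs the receiver’s message when the player starts looking at it, and activates it when E is pressed, using the legacy Input Manager that the Standard Assets FPSController also relies on.

```csharp
using UnityEngine;

// Hypothetical sketch of CheckInteraction; only the two public fields
// come from the article, everything else is assumed.
public class CheckInteraction : MonoBehaviour
{
    // How close the player must be to interact (4 in the example scene).
    public float minInteractionDistance = 4f;

    // Where the ray starts; assign the FPSController's camera.
    public Transform rayOrigin;

    // Receiver currently at the center of the screen, if any.
    private InteractionReceiver current;

    void Update()
    {
        InteractionReceiver receiver = null;
        RaycastHit hit;

        // Cast along the camera's forward direction, i.e. through the screen center.
        if (Physics.Raycast(rayOrigin.position, rayOrigin.forward, out hit, minInteractionDistance))
        {
            receiver = hit.collider.GetComponent<InteractionReceiver>();
        }

        // Only react when the looked-at receiver changes, to avoid logging every frame.
        if (receiver != current)
        {
            current = receiver;

            // Message region: log it here, or show it on screen instead.
            if (current != null)
            {
                Debug.Log(current.message);
            }
        }

        if (current != null && Input.GetKeyDown(KeyCode.E))
        {
            current.Activate();
        }
    }
}
```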

Objects with action

When we press the button, two things happen: first, the gate opens or closes depending on its current state; second, the button turns green when the gate is open and red when it is closed.

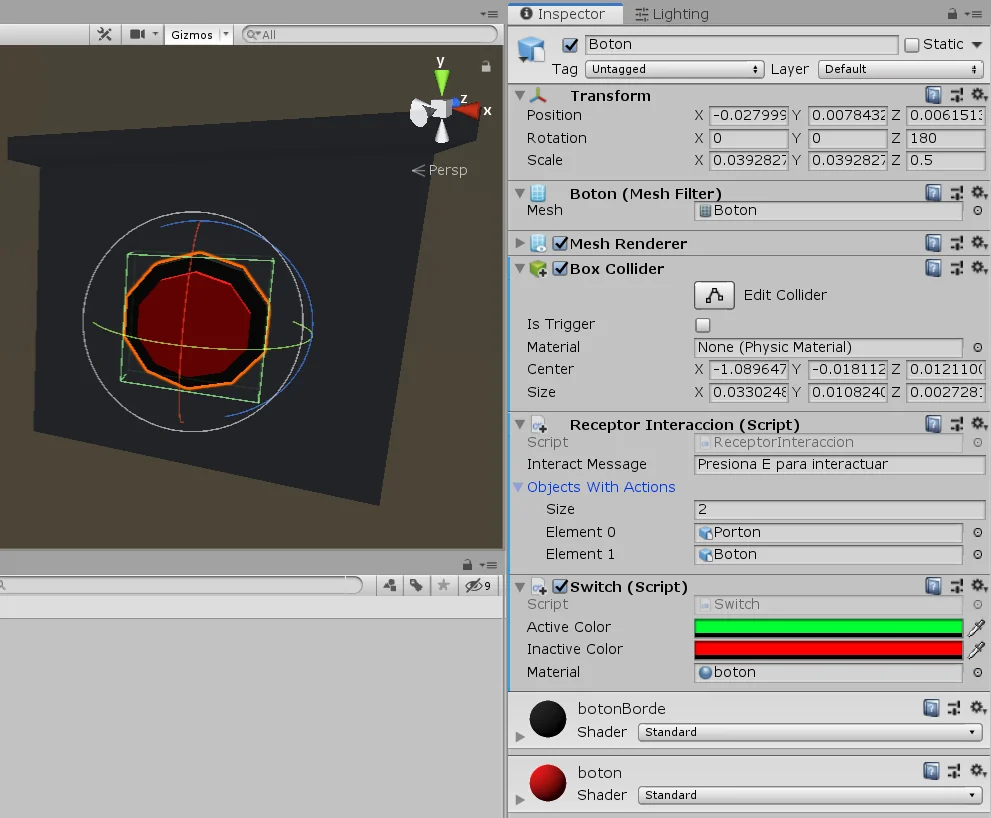

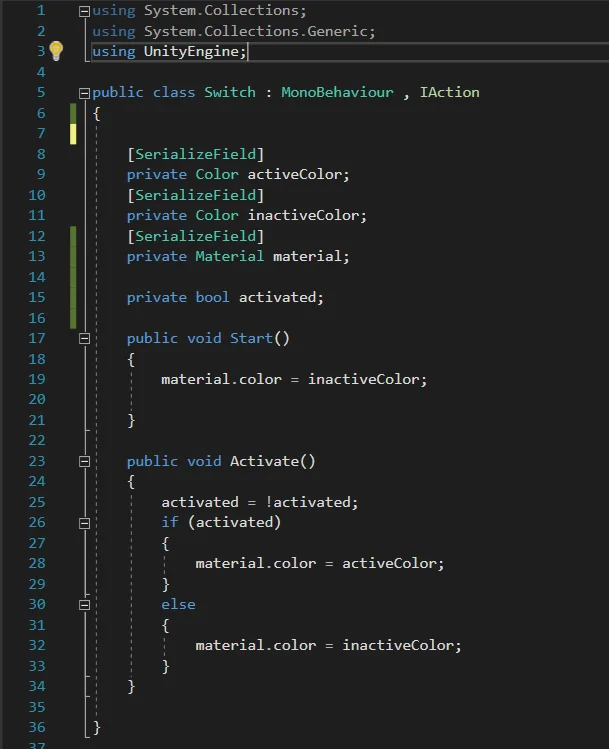

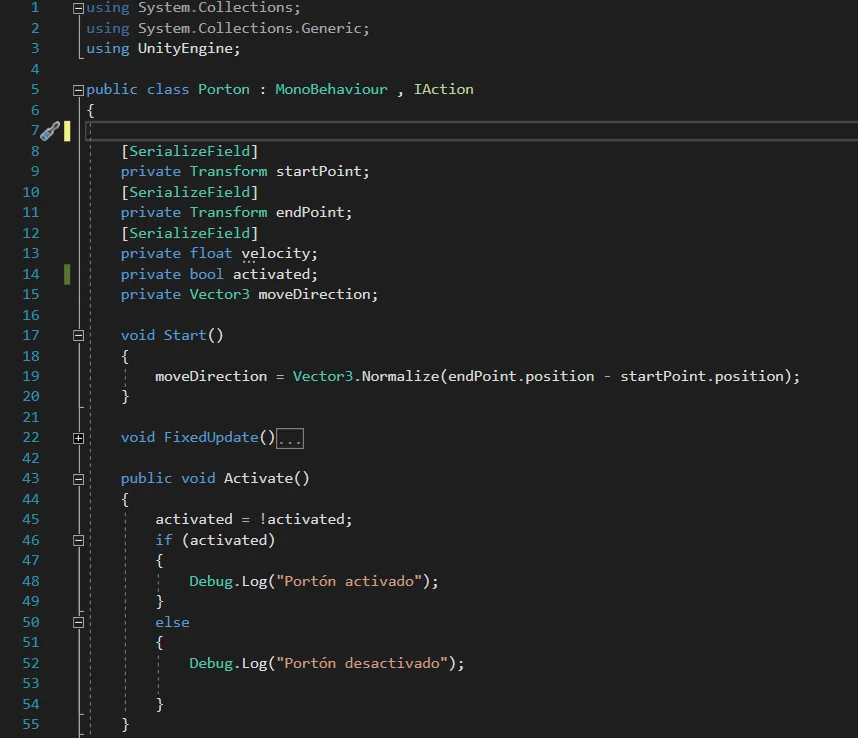

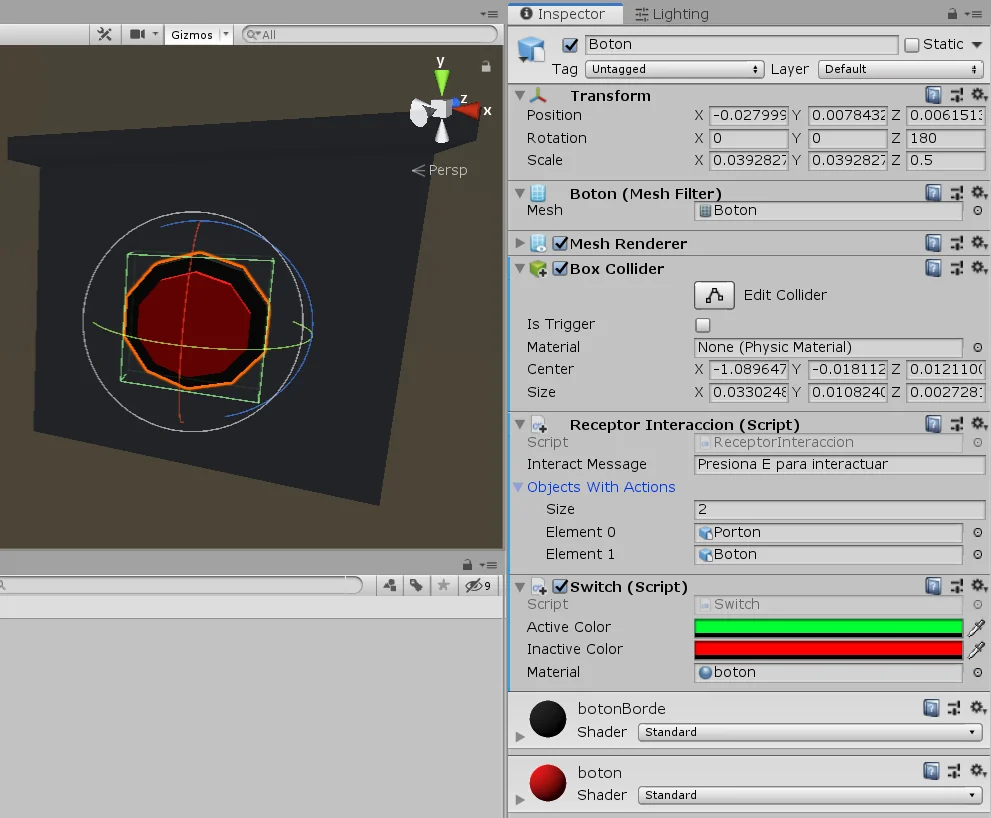

These two actions are programmed separately in two scripts: “Switch”, assigned to the button GameObject (figure 2), and “Gate” (“Porton” in Spanish), assigned to the gate GameObject (figure 3).

Scripts that solve the actions

The actions must be programmed specifically, that is, we must write additional scripts that implement them. What these scripts do depends on the needs of each project.

In this article we look at the two scripts that implement the button and gate actions.

The only requirement for action scripts is that they implement “IAction”, a programming interface. This forces our script to define a method called “Activate”, inside which we do everything the action needs to do when the receiver activates it.
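As an illustration of an action script for the button, here is a hypothetical Switch sketch (not the code shown in the article’s figures). It assumes the button’s Renderer sits on the same GameObject as the script and that the gate starts closed, so the button starts red. Because a single press activates both the button and the gate, toggling a local flag keeps the color in step with the gate without the two scripts referencing each other.

```csharp
using UnityEngine;

// Hypothetical Switch action: toggles the button color on each activation.
public class Switch : MonoBehaviour, IAction
{
    private Renderer buttonRenderer;

    // Assumed initial state: gate closed, so the button starts red.
    private bool gateOpen = false;

    void Start()
    {
        buttonRenderer = GetComponent<Renderer>();
        buttonRenderer.material.color = Color.red;
    }

    // Called by InteractionReceiver each time the button is pressed.
    public void Activate()
    {
        gateOpen = !gateOpen;
        buttonRenderer.material.color = gateOpen ? Color.green : Color.red;
    }
}
```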

Figures 4 and 5 show the examples for the button and the gate. I’ll probably make videos explaining how to build more mechanisms with this basic interaction system, and I may improve it as well.
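A similar hypothetical sketch for the gate action follows. It assumes the gate opens by sliding along a configurable offset (a vertical lift by default); a rotating gate or an Animator would work just as well. Activate only flips the target state, and Update moves the gate toward that target each frame, so pressing the button mid-movement simply reverses the direction.

```csharp
using UnityEngine;

// Hypothetical Gate action: slides between a closed and an open position.
public class Gate : MonoBehaviour, IAction
{
    // Assumed: the gate lifts 3 units when open. Adjust in the Inspector.
    [SerializeField] private Vector3 openOffset = new Vector3(0f, 3f, 0f);

    // Movement speed in units per second.
    [SerializeField] private float speed = 2f;

    private Vector3 closedPosition;
    private bool isOpen = false;

    void Start()
    {
        // The position in the scene is treated as the closed position.
        closedPosition = transform.position;
    }

    // Called by InteractionReceiver: open if closed, close if open.
    public void Activate()
    {
        isOpen = !isOpen;
    }

    void Update()
    {
        Vector3 target = isOpen ? closedPosition + openOffset : closedPosition;
        transform.position = Vector3.MoveTowards(transform.position, target, speed * Time.deltaTime);
    }
}
```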

Assigning Actions to the Interaction Receiver

Once we have the actions programmed, we assign them to the corresponding GameObjects and select the object we use as the trigger for those actions (in this case the button).

The InteractionReceiver script has a field called “ObjectsWithActions”, an array of GameObjects in which we place all the objects that will be activated when we press the button.

For this to work, each object placed here must have a script that implements IAction. I set “Size” to 2 and assign the Gate and Button GameObjects, which have the Gate and Switch scripts attached, respectively (see figures 3 and 6).

As a result, when the button is pressed, the InteractionReceiver script takes the “Gate” and “Button” objects, gets the scripts on them that implement the IAction interface, and calls the Activate method of each one.
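The activation loop just described can be sketched as follows. This is a hypothetical reconstruction: the field names `message` and `objectsWithActions` (displayed in the Inspector as “Objects With Actions”) and the public Activate method are assumptions. `[RequireComponent(typeof(Collider))]` is one way to get the behavior described earlier, where Unity refuses to add the script to a GameObject that has no Collider.

```csharp
using UnityEngine;

// Hypothetical sketch of InteractionReceiver.
// RequireComponent makes Unity refuse to add this script to a GameObject
// without a Collider, so CheckInteraction's raycast has something to hit.
[RequireComponent(typeof(Collider))]
public class InteractionReceiver : MonoBehaviour
{
    // Optional text that CheckInteraction can log or show on screen.
    public string message;

    // Objects to trigger; each should carry at least one IAction script
    // (in the example scene: Gate and Button).
    public GameObject[] objectsWithActions;

    // Called by CheckInteraction when the player presses E.
    public void Activate()
    {
        foreach (GameObject target in objectsWithActions)
        {
            // Skip empty slots left in the Inspector.
            if (target == null) continue;

            // GetComponents accepts interface types, so every IAction on the object runs.
            foreach (IAction action in target.GetComponents<IAction>())
            {
                action.Activate();
            }
        }
    }
}
```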

Conclusion

In this article we have seen how the basic interaction system works. With this solution we can place interactable objects in the scene (such as buttons, switches or levers) that perform one or more actions when activated.

Any action we need must be implemented in its own script. The only requirement is that the script implements the IAction interface, which means it must define a public method called Activate.